Multimodal Monday #22: Spatial Crisis, Trust Bottleneck

Multimodal Monday #22: MLLMs fail basic rotations, Intern-S1 beats GPT on science, and MultiTrust-X exposes vulnerabilities. Trust rebuilds AI!

Week of August 18-24, 2025

Quick Takes (TL;DR)

• MLLMs can't tell left from right - Even GPT-5 and o3 fail at kindergarten-level tasks: distinguishing 90° from 270° rotations. Meanwhile, MCOUT proves we've been thinking about AI reasoning all wrong, ditching language for continuous thought vectors yields 8% better performance.

• Open-source just ate closed-source's lunch - Intern-S1's 241B parameter model beats GPT and Claude at molecular synthesis and scientific tasks, proving specialized beats generalized. The era of "bigger is better" might be ending.

• Trust is the trillion-dollar problem - MultiTrust-X reveals current MLLMs are ticking time bombs across safety, fairness, and privacy. The gap between capability and trustworthiness is so wide, we're building Ferraris with bicycle brakes.

🧠 Research Highlights

Multimodal Chain of Continuous Thought for Latent-Space Reasoning in Vision-Language Models

MCOUT ditches language-based reasoning entirely, instead using continuous hidden vectors that evolve iteratively, like thoughts without words. The approach achieves up to 8.23% accuracy improvements on challenging benchmarks by reasoning directly in multimodal latent space rather than forcing everything through the bottleneck of language tokens.

Why It Matters: This paradigm shift could revolutionize how AI systems understand and connect information across text, images, and audio, no translation required.

Links: Paper | Code

Learning to Steer: Input-dependent Steering for Multimodal LLMs

L2S introduces context-aware behavior modification that adapts to each specific input rather than applying blanket safety rules. The system uses a small auxiliary module to predict query-specific steering vectors, enabling nuanced responses, like knowing when to refuse harmful requests versus redirecting medical questions to professionals.

Why It Matters: Finally, AI safety that isn't one-size-fits-all, critical for deploying systems that can handle diverse real-world scenarios without being overly restrictive.

Links: Paper

RotBench: Evaluating Multimodal Large Language Models on Identifying Image Rotation

This embarrassingly simple benchmark asks models to identify if an image is rotated 0°, 90°, 180°, or 270°, and they spectacularly fail. Even GPT-5, o3, and Gemini-2.5-Pro can't reliably distinguish between 90° and 270° rotations, exposing a fundamental blind spot in spatial reasoning that no amount of prompting or fine-tuning can fix.

Why It Matters: If AI can't tell left from right, how can we trust it with autonomous driving, robotics, or any task requiring spatial awareness?

Links: Paper

SPANER: Shared Prompt Aligner for Multimodal Semantic Representation

SPANER creates truly unified semantic spaces where text, images, and audio cluster by meaning rather than modality using shared prompts as conceptual anchors. The framework is inherently extensible, new modalities plug in without architectural changes, achieving competitive few-shot retrieval while maintaining semantic coherence.

Why It Matters: This enables search that actually understands that a photo of a barking dog, the sound of barking, and the text "dog barking" are all the same concept.

Links: Paper

Unveiling Trust in Multimodal Large Language Models: Evaluation, Analysis, and Mitigation

MultiTrust-X benchmarks MLLMs across 32 tasks covering truthfulness, robustness, safety, fairness, and privacy, and the results are alarming. Current models show massive vulnerabilities, with multimodal training actually amplifying risks present in base LLMs, while most mitigation strategies create unexpected trade-offs that compromise utility.

Why It Matters: This research proves we're nowhere near deployment-ready for high-stakes applications, current MLLMs are brilliant but fundamentally untrustworthy.

Links: Paper

Can we Evaluate RAGs with Synthetic Data?

This study reveals that synthetic benchmarks work reasonably well for testing retrieval configurations but completely fail when comparing different generator architectures. The breakdown occurs because synthetic questions tend toward technical specificity while human questions are ambiguous and general, a mismatch that yields misleading evaluation results.

Why It Matters: Using synthetic data to evaluate RAG systems is like training for a marathon on a treadmill, it might help, but it won't prepare you for real terrain.

Links: Paper | GitHub

🛠️ Tools & Techniques

Intern-S1: A Scientific Multimodal Foundation Model

Intern-S1 packs 241B parameters (28B activated) trained on 5 trillion tokens, with half specifically from scientific domains, achieving SOTA performance in molecular synthesis and crystal stability prediction. The model uses Mixture-of-Rewards to train on 1000+ tasks simultaneously, surpassing closed-source models in specialized scientific applications.

Why It Matters: Open-source just proved it can beat Big Tech at their own game, when you focus on doing one thing exceptionally well.

Links: Paper | HuggingFace | GitHub

ElevenLabs launches Eleven Music API (August 18, 2025)

ElevenLabs expanded from voice to music with the first commercially-licensed AI music generation API, already powering VEED, AMP, and Hedra Labs. The API generates both vocal and instrumental tracks across any genre from text descriptions, with full commercial rights cleared through partnerships with labels and artists.

Why It Matters: AI-generated content just went mainstream, this isn't a demo, it's production-ready infrastructure with real customers and proper licensing.

Links: Blog Post | Announcement

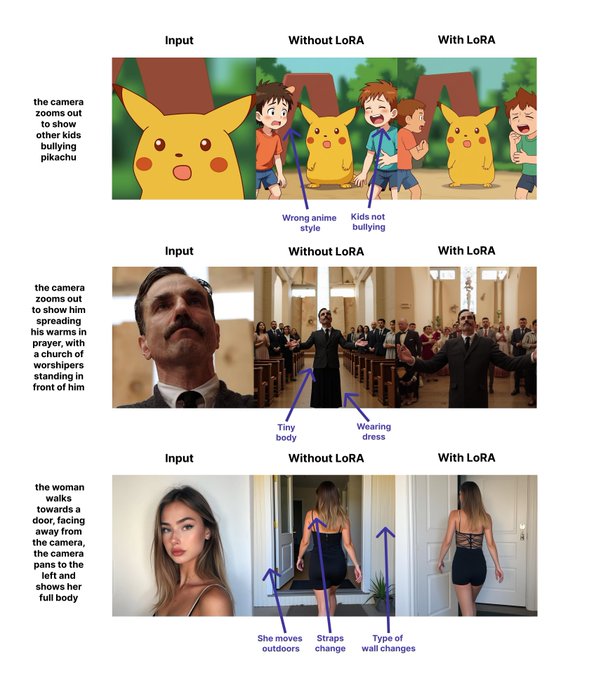

InScene: Flux Kontext LoRA for Scene Consistency

InScene solves the holy grail of AI image generation, maintaining scene consistency across multiple shots, using just 394 carefully curated image pairs. The LoRA enables variations while preserving backgrounds, characters, and visual styles, with 4,432 downloads last month alone proving its practical utility.

Why It Matters: Finally, AI that can generate a coherent visual story instead of random disconnected images.

Links: HuggingFace | Announcement | Dataset

SAM 2 and MatchAnything Integration into HuggingFace Transformers

Meta's SAM 2 brings segment-anything capabilities to video with frame-to-frame object tracking, while MatchAnything enables robust cross-modal visual matching. Both now integrate seamlessly with HuggingFace Transformers, complete with optimized inference pipelines and comprehensive documentation.

Why It Matters: State-of-the-art computer vision just became as easy to use as from transformers import SAM2 democratization at its finest.

Links: SAM 2 Integration | MatchAnything Integration

📈 Trends & Predictions

The Spatial Reasoning Crisis: Why MLLMs Can't Tell Left from Right

RotBench's revelation that even GPT-5 can't distinguish 90° from 270° rotations isn't just embarrassing, it's existential. This fundamental inability to understand spatial relationships exposes a critical flaw in how vision-language models process visual information. They're pattern matchers, not spatial reasoners.

The implications are staggering. Autonomous vehicles need to understand that a "turn left" sign rotated 180° means something entirely different. Robotic systems need spatial awareness to manipulate objects. AR/VR applications require understanding of 3D space and orientation. Yet our most advanced models fail at tasks a toddler could handle.

This suggests we need entirely new architectures—perhaps specialized spatial reasoning modules or fundamentally different approaches to visual encoding. The current transformer-based architectures might have hit a wall that no amount of scaling can overcome. Expect to see hybrid systems emerging that combine general multimodal models with specialized spatial reasoning components, similar to how the human brain has distinct regions for spatial processing.

Trust: The Trillion-Dollar Bottleneck

MultiTrust-X's comprehensive evaluation reveals an uncomfortable truth: we've built Formula 1 race cars with no brakes. Current MLLMs are incredibly capable but fundamentally untrustworthy across every critical dimension, safety, fairness, privacy, robustness, and truthfulness.

This trust gap is becoming the primary barrier to commercial deployment. Enterprises won't adopt systems that might hallucinate financial advice, generate biased hiring recommendations, or leak private information. The liability risks are astronomical. One high-profile failure could set the entire industry back years.

The solution won't come from post-hoc patches or safety fine-tuning. We need trustworthiness built into the architecture from day one. Expect to see new model designs that prioritize verifiability over capability, systems that can explain their reasoning in auditable ways, and architectures that enforce safety constraints at the computational level rather than through training.

The winners in the multimodal AI race won't be those with the highest benchmark scores, they'll be those who first achieve "boring" reliability. The company that builds the Toyota Camry of AI (reliable, safe, predictable) will capture more value than those building AI Ferraris that occasionally drive off cliffs.

🧩 Community + Shoutouts

Reality Check Award - Massive props to the RotBench team for having the audacity to ask "can AI identify rotated images?" and exposing that our emperor has no clothes. Sometimes the simplest questions reveal the biggest problems.

Open Source Heroes - Intern-S1 team deserves a standing ovation for proving open-source can compete with, and beat, Big Tech's closed models. This isn't just a technical achievement; it's a victory for accessible AI.

Builder Spotlight - Shoutout to @fofrAI for their GPT-5 + ffmpeg video editor that actually works, from basic cuts to complex transformations via natural language.

Also props to @xenovacom for the WebGPU-accelerated DINOv3 demo proving browser-based computer vision isn't just possible, it's fast.

That's a wrap for Multimodal Monday #22! This week proved that bigger isn't always better, trust is harder than intelligence, and somehow, we've built AI that can write symphonies but can't tell if a picture is sideways.

Ready to build multimodal solutions that actually work? Let's talk