Multimodal Monday #45: Birds, Whales, and the End of Latency

Your Weekly Multimodal AI Roundup (Feb 9 - Feb 16)

Quick Take (TL;DR)

- Voice AI drops the walkie-talkie act. NVIDIA's PersonaPlex-7B and ElevenLabs' Expressive Mode both ship full-duplex conversation. The AI listens while it talks, interrupts naturally, and adjusts tone mid-sentence. Turn-taking latency is dead.

- Vision goes native. Qwen3.5 (397B parameters) and DeepGen 1.0 bake visual understanding directly into the model architecture instead of wiring a vision encoder to a language model after the fact. The result: tighter reasoning over charts, documents, and complex images.

- A bird model decoded whale songs. Google fine-tuned Perch 2.0 (trained on birdsong) to classify whale vocalizations. It worked, which means bioacoustic signals share deeper structural patterns than anyone expected.

Tools, Models and Techniques

Qwen3.5-397B-A17B - Qwen's new foundation model pairs a 397B-parameter vision-language architecture with hybrid linear attention heads. It handles document parsing, chart analysis, and visual reasoning natively rather than routing through a separate encoder. Why it matters: An open model at this scale with native multimodal integration puts serious pressure on proprietary alternatives. Blog | Hugging Face

PersonaPlex-7B - NVIDIA released a 7B voice model that listens and speaks at the same time. It supports natural interruptions ("barge-in"), overlapping speech, and real-time turn negotiation without the pause-wait-respond loop. Why it matters: Full-duplex conversation removes the single biggest friction point in voice AI: latency. Hugging Face

ElevenAgents Expressive Mode - ElevenLabs added breath, pauses, and emotional inflection to their voice agents. The output sounds less like text-to-speech and more like someone actually thinking before they talk. Why it matters: Voice agents in support, coaching, and companionship roles need to sound like they care, and this gets closer. Blog | Try it

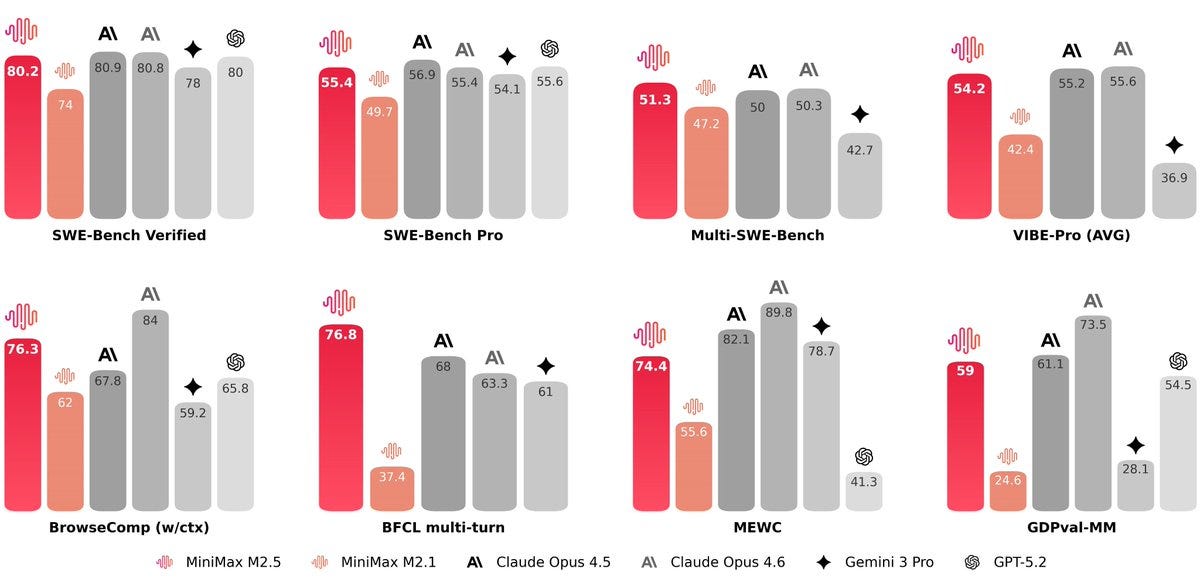

MiniMax M2.5 - MiniMax open-sourced a frontier model tuned for practical work: coding, writing, and structured analysis. It prioritizes instruction-following accuracy over open-ended chat. Why it matters: A model built to execute tasks reliably matters more than one that chats well. Hugging Face

Seedance 2.0 - ByteDance's video generator takes text, images, audio, or existing video as input and produces new video synchronized to the audio beat. It automates the tedious frame-by-frame alignment work that eats hours in post-production. Why it matters: Audio-visual sync is the bottleneck in short-form video production, and this removes it. Project Page

- Qwen-Image-2.0: Professional infographics and photorealism generation. Blog

- DeepGen 1.0: A lightweight 5B-parameter unified multimodal model. Hugging Face

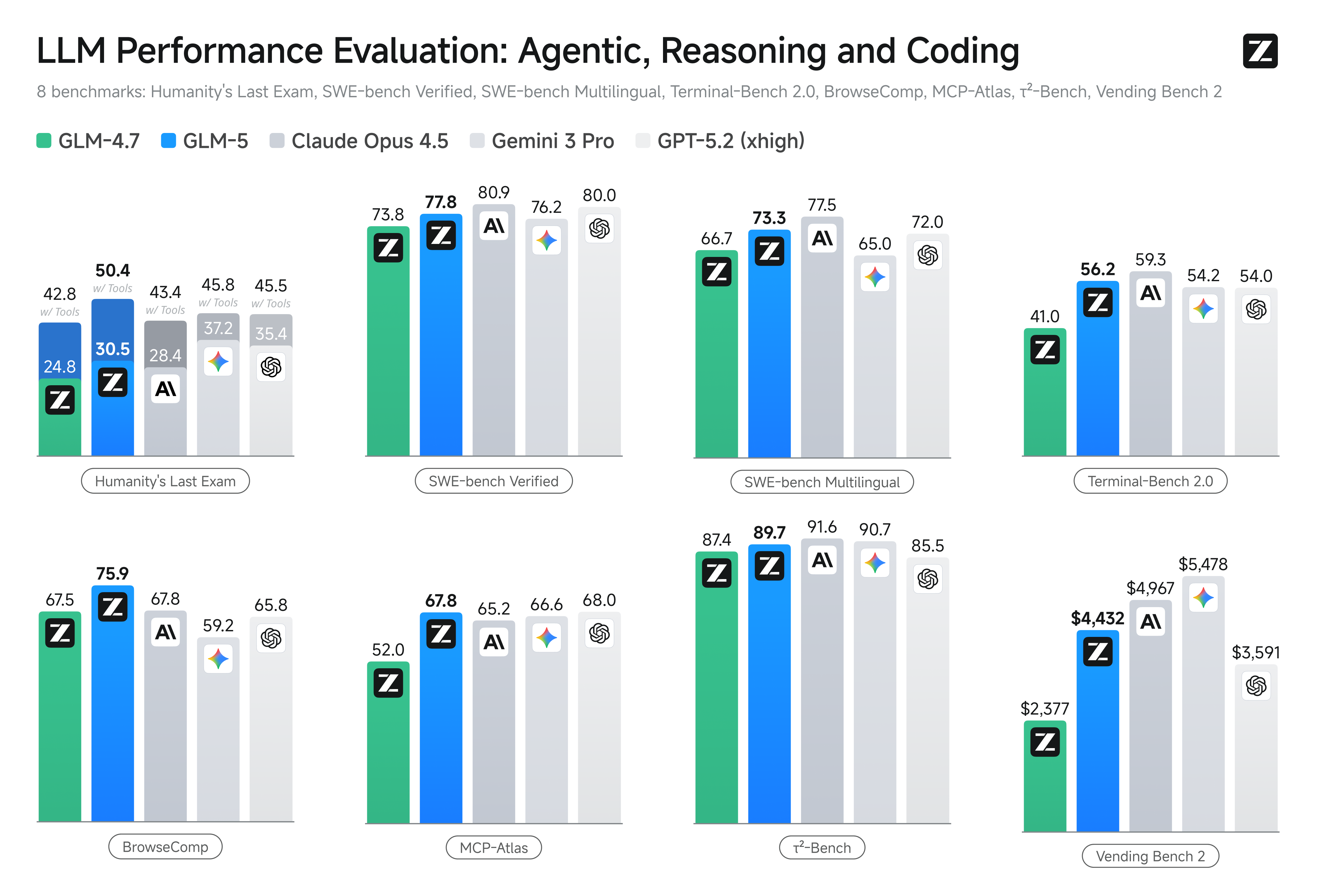

- GLM-5: From vibe coding to agentic engineering. Blog

- KaniTTS2: Open-source 400M TTS model that runs in 3GB VRAM. Hugging Face

- SoulX-Singer: High-quality zero-shot singing voice synthesis. GitHub

- MioTTS-2.6B: Lightweight TTS optimized for speed in English and Japanese. Hugging Face

- FireRed-Image-Edit-1.0: New tool for image editing. Hugging Face

- Qwen3-TTS: 1.7B parameters of clean, natural speech synthesis. Hugging Face

- Ming-flash-omni 2.0: New multimodal model from InclusionAI. Hugging Face

Research Highlights

EchoJEPA: Latent Prediction for Hearts - A self-supervised foundation model trained on 18 million echocardiograms. Instead of predicting noisy ultrasound pixels, it learns in latent space and separates clinical signal from artifact, outperforming existing cardiac assessment methods. Why it matters: Self-supervised training on massive unlabeled medical data catches anomalies that small labeled datasets miss. Paper

Bioacoustics Transfer Learning - Google Research adapted Perch 2.0, trained entirely on bird songs, to classify whale vocalizations. The cross-domain transfer worked because bioacoustic signals share fundamental spectral and temporal features across species. Why it matters: You can train on abundant data (birds) and fine-tune for scarce data (whales), which unlocks conservation research without needing millions of labeled samples per species. Blog

Beyond the Unit Hypersphere - This paper challenges the standard practice of normalizing embeddings onto the unit hypersphere in contrastive learning. The authors show that embedding magnitude carries meaningful information about confidence and specificity that normalization destroys. Why it matters: Preserving magnitude leads to more nuanced retrieval and better performance on ambiguous queries. Paper

DuoGen: Mixed-Media Storytelling - NVIDIA's DuoGen generates coherent interleaved sequences of images and text. It decides when to show and when to tell, keeping visual and textual content consistent across the full narrative. Why it matters: This opens the door to AI-generated tutorials, articles, and illustrated content that reads as authored rather than assembled. Project Page

UniAudio 2.0 - A single audio language model that handles speech, music, and sound effects through text-aligned factorized tokenization. One framework generates, edits, and mixes across all audio types without switching models. Why it matters: Unifying the audio stack (TTS, music generation, foley) into one model creates workflows that were previously impossible without multiple specialized tools. Paper

- ALIVE: Lifelike audio-video generation. Project Page

- ConsID-Gen: View-consistent, identity-preserving image-to-video generation. Project Page

- JUST-DUB-IT: Video dubbing via joint audio-visual diffusion. Project Page

- Voice-First Human-AI Collaboration: Exploring LMMs in mixed reality. Paper

- Multimodal Manufacturing Safety Chatbot: Benchmark for RAG approaches in safety. Paper

- Alzheimer's Detection: Multimodal fusion for better diagnosis. Paper

Trends & Predictions

Full-Duplex Voice Is Here

PersonaPlex-7B and ElevenAgents both shipped full-duplex voice this week. The "you talk, then I talk" model is officially legacy.

Real conversations overlap. People interrupt, confirm with "uh-huh," and change direction mid-thought. Full-duplex models handle all of this. More importantly, continuous listening lets the model start composing a response before you finish your sentence. That shaves hundreds of milliseconds off response time, which matters in customer support, gaming, and any scenario where hesitation breaks trust. And when the model hears frustration in your voice while you're still talking, it can adjust its response before delivering it.

Native Multimodal Architectures Are Winning

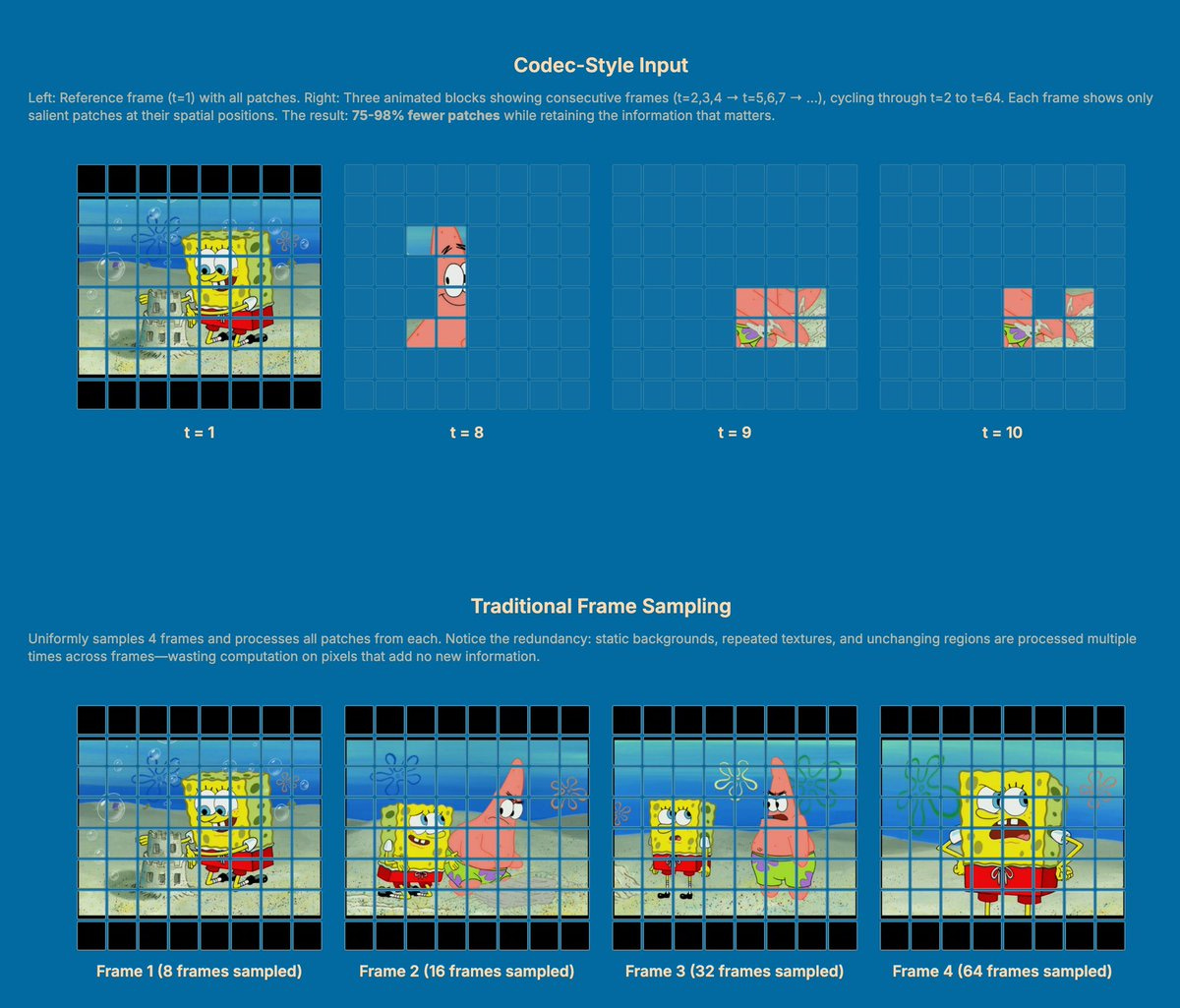

Qwen3.5 and DeepGen 1.0 both build vision into the model from the ground up. No separate encoder. No adapter layer. No translation step. When vision and language train together from scratch, the model reasons with visual information instead of converting it to text first. You get a system that reads a chart and understands the argument the chart is making, not just the numbers on it. Unified architectures also cut inference overhead because data doesn't bounce between modules. This is what enables tasks like "analyze this graph in the context of the surrounding report" where tight cross-modal reasoning is the whole point.

Community + Shoutouts

- Larry the OpenClaw: Shoutout to @oliverhenry for the writeup on Larry, the open-source robot arm doing social media. A fun look at embodied AI in the wild. X Post

- OneVision Encoder: Thanks to @brian_bo_li for the deep dive into the OneVision Encoder. Understanding the "eyes" of these models is crucial for building better apps. X Post

- AutoGuidance Node: A great resource for the ComfyUI community: a custom node implementing AutoGuidance. GitHub

- Kling 3.0 Fun: @lexx_aura shows off the capabilities (and hilarity) of Kling 3.0. Sometimes the best way to test a model is to just make something weird. X Post

That's a wrap for Multimodal Monday #45! From full-duplex voice models that listen and speak simultaneously, to 397B-parameter architectures that reason with pixels instead of converting them to words, to a birdsong classifier that turned out to understand whales, this week showed multimodal AI getting less polite and more useful.

Ready to build multimodal solutions that actually work? Let's talk